MiB and GiB vs. MB and GB memory size notation – What’s the Difference?

You have probably noticed that after you format a hard disk drive (HDD), its size appears to shrink compared with the capacity the manufacturer printed on the drive's label.

The reason is that manufacturers label their products using the SI prefixes (kilo, mega, giga, …) and their symbols (k, M, G, …), which refer to powers of 10, i.e. the familiar decimal system.

For example, when using the decimal system:

1 Kilobyte (KB) -> 10³ bytes -> 1,000 bytes

1 Megabyte (MB) -> 10⁶ bytes -> 1,000,000 bytes

1 Gigabyte (GB) -> 10⁹ bytes -> 1,000,000,000 bytes

1 Terabyte (TB) -> 10¹² bytes -> 1,000,000,000,000 bytes
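The decimal values follow directly from multiplying by 1000 for each prefix step. A minimal Python sketch that reproduces them, assuming nothing beyond the definitions above (the helper name `si_bytes` is hypothetical):

```python
# Decimal (SI) prefixes: each step multiplies by 1000 = 10**3.
SI_PREFIXES = ["kilo", "mega", "giga", "tera"]


def si_bytes(step: int) -> int:
    """Number of bytes for the SI prefix at position `step` (1 = kilo)."""
    return 1000 ** step


for step, name in enumerate(SI_PREFIXES, start=1):
    # The "," format specifier inserts thousands separators, e.g. 1,000,000
    print(f"1 {name}byte = 10^{3 * step} bytes = {si_bytes(step):,} bytes")
```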

Units based on powers of 10

This definition is most commonly used for data transfer rates in computer networks, internal bus, hard drive and flash media transfer speeds, and for the capacities of most storage media, particularly hard drives, flash-based storage, and DVDs. It is also consistent with the other uses of the SI prefixes in computing, such as CPU clock speeds or measures of performance.

Source: https://en.wikipedia.org/wiki/Byte#Units_based_on_powers_of_10

Computers, in contrast, operate with powers of 2, i.e. the binary system.

The use of the same unit prefixes with two different meanings has caused confusion. Starting around 1998, the International Electrotechnical Commission (IEC) introduced a new set of binary prefixes for powers of 2 (more precisely, powers of 1024 = 2¹⁰).

The US National Institute of Standards and Technology (NIST) requires that SI prefixes only be used in the decimal sense: kilobyte (KB) and megabyte (MB) denote one thousand bytes and one million bytes respectively (consistent with SI), while new terms such as kibibyte, mebibyte and gibibyte, having the symbols KiB, MiB, and GiB, denote 1024 bytes, 1048576 bytes, and 1073741824 bytes, respectively.

Source: https://en.wikipedia.org/wiki/Binary_prefix

For example, when using the binary system:

1 Kibibyte (KiB) -> 2¹⁰ bytes -> 1,024 bytes

1 Mebibyte (MiB) -> 2²⁰ bytes -> 1,048,576 bytes

1 Gibibyte (GiB) -> 2³⁰ bytes -> 1,073,741,824 bytes

1 Tebibyte (TiB) -> 2⁴⁰ bytes -> 1,099,511,627,776 bytes
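The binary values work the same way, only with a factor of 1024 per step. A minimal Python sketch (the helper name `iec_bytes` is hypothetical) that also checks that 1024 to the n-th power equals 2 to the power 10·n:

```python
# Binary (IEC) prefixes: each step multiplies by 1024 = 2**10.
IEC_PREFIXES = ["kibi", "mebi", "gibi", "tebi"]


def iec_bytes(step: int) -> int:
    """Number of bytes for the IEC prefix at position `step` (1 = kibi)."""
    return 1024 ** step


for step, name in enumerate(IEC_PREFIXES, start=1):
    # 1024**step is exactly the same integer as 2**(10 * step)
    assert iec_bytes(step) == 2 ** (10 * step)
    print(f"1 {name}byte = 2^{10 * step} bytes = {iec_bytes(step):,} bytes")
```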

Units based on powers of 2

The JEDEC convention is prominently used by the Microsoft Windows operating system, and random-access memory capacity, such as main memory and CPU cache size, and in marketing and billing by telecommunication companies, such as Vodafone, AT&T, Orange and Telstra.

Source: https://en.wikipedia.org/wiki/Byte#Units_based_on_powers_of_2

Coming back to the hard disk drive (HDD) from the beginning: whether its size appears to shrink after formatting depends on the point of view, namely on which unit prefix (SI prefix or binary prefix) is used to express it.

Suppose a manufacturer labels a hard disk drive (HDD) as exactly 1 TB (1000 GB) in decimal notation (SI prefixes). After you format the drive, the operating system displays only about 931 GB (strictly speaking, 931 GiB). This is because an operating system using binary units divides the byte count by 1024³ = 1,073,741,824 bytes per "gigabyte" instead of 1000³ = 1,000,000,000 bytes. The number of bytes is the same in both cases; only the unit used to express it differs.
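The display difference described above can be reproduced with a small size formatter. This is a minimal Python sketch, not how any particular operating system implements it; the function name `format_size` is hypothetical, and it simply divides by 1000 or 1024 until the value fits the next unit:

```python
def format_size(num_bytes: int, binary: bool = False) -> str:
    """Format a byte count with SI (1000-based) or IEC (1024-based) prefixes."""
    base = 1024 if binary else 1000
    units = (["KiB", "MiB", "GiB", "TiB", "PiB"] if binary
             else ["kB", "MB", "GB", "TB", "PB"])
    value = float(num_bytes)
    unit = "B"
    # Move to the next larger unit while the value is at least one of them.
    for next_unit in units:
        if value < base:
            break
        value /= base
        unit = next_unit
    return f"{value:.2f} {unit}"


label_bytes = 10**12  # a drive sold as "1 TB" (decimal)
print(format_size(label_bytes))               # manufacturer's view
print(format_size(label_bytes, binary=True))  # binary view of the same bytes
```

With the decimal base the drive comes out as 1.00 TB, with the binary base as 931.32 GiB, even though `label_bytes` never changes.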

You can calculate this as follows.

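SI prefix

1 Gigabyte (GB) = 10⁹ bytes = 1,000,000,000 bytes

Binary prefix

1 Gibibyte (GiB) = 2³⁰ bytes = 1,073,741,824 bytes

To convert a value x given in GB to GiB, multiply by the number of bytes per GB and divide by the number of bytes per GiB:

x GB = (x × 1,000,000,000 / 1,073,741,824) GiB

1000 GB = (1,000,000,000,000 / 1,073,741,824) GiB ≈ 931.32 GiB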
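The same calculation generalizes to any pair of units. A minimal Python sketch, assuming only the byte counts defined in this article; `BYTES_PER_UNIT` and `convert` are hypothetical names. Using `fractions.Fraction` keeps the result exact, since 10¹² / 2³⁰ has a finite binary expansion:

```python
from fractions import Fraction

# Bytes per unit, taken from the decimal and binary definitions above.
BYTES_PER_UNIT = {
    "GB": 10**9,   # SI gigabyte
    "TB": 10**12,  # SI terabyte
    "GiB": 2**30,  # IEC gibibyte
    "TiB": 2**40,  # IEC tebibyte
}


def convert(value: int, from_unit: str, to_unit: str) -> Fraction:
    """Exact conversion: value * bytes(from_unit) / bytes(to_unit)."""
    return Fraction(value) * BYTES_PER_UNIT[from_unit] / BYTES_PER_UNIT[to_unit]


gib = convert(1000, "GB", "GiB")
print(gib)         # 244140625/262144
print(float(gib))  # 931.3225746154785
```

Converting 1 TB to TiB the same way gives roughly 0.9095 TiB.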

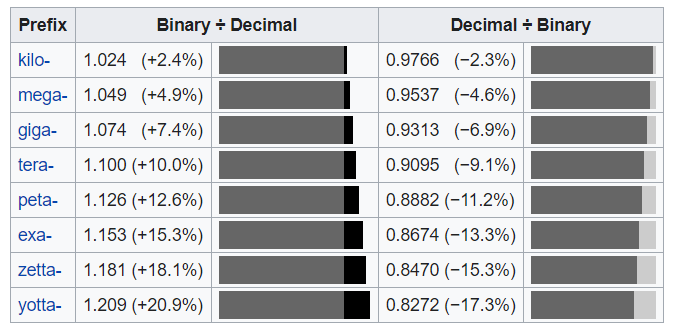

Deviation between powers of 1024 and powers of 1000

https://en.wikipedia.org/wiki/Binary_prefix#Inconsistent_use_of_units

Computer storage has become cheaper per unit and thereby larger, by many orders of magnitude since “K” was first used to mean 1024. Because both the SI and “binary” meanings of kilo, mega, etc., are based on powers of 1000 or 1024 rather than simple multiples, the difference between 1M “binary” and 1M “decimal” is proportionally larger than that between 1K “binary” and 1k “decimal,” and so on up the scale. The relative difference between the values in the binary and decimal interpretations increases, when using the SI prefixes as the base, from 2.4% for kilo to nearly 21% for the yotta prefix.
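The growing deviation quoted above can be checked directly. A short Python sketch (the helper name `binary_excess_percent` is hypothetical) computes how much larger each binary multiple is than its decimal counterpart, using the SI value as the base as in the quote:

```python
PREFIXES = ["kilo", "mega", "giga", "tera", "peta", "exa", "zetta", "yotta"]


def binary_excess_percent(step: int) -> float:
    """Percent by which 1024**step exceeds 1000**step (SI value as base)."""
    return (1024 ** step / 1000 ** step - 1) * 100


for step, name in enumerate(PREFIXES, start=1):
    print(f"{name:>6}: {binary_excess_percent(step):5.1f} %")
```

The first line shows 2.4 % for kilo and the last shows 20.9 % for yotta, matching the "2.4% to nearly 21%" range.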

Byte

The byte is a unit of digital information that most commonly consists of eight bits. Historically, the byte was the number of bits used to encode a single character of text in a computer and for this reason it is the smallest addressable unit of memory in many computer architectures.

To disambiguate arbitrarily sized bytes from the common 8-bit definition, network protocol documents such as The Internet Protocol (RFC 791) refer to an 8-bit byte as an octet.

The octet is a unit of digital information in computing and telecommunications that consists of eight bits. The term is often used when the term byte might be ambiguous, as the byte has historically been used for storage units of a variety of sizes.

Source: https://en.wikipedia.org/wiki/Byte

For example, an IPv4 address is 32 bits long and is usually written in dot-decimal notation as four octets (4 bytes), such as 192.168.0.1.
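To see the four octets concretely, Python's standard `ipaddress` module can expose an address as raw bytes. A minimal sketch; the address 192.168.0.1 is only an illustrative example:

```python
import ipaddress

# Illustrative example address.
addr = ipaddress.IPv4Address("192.168.0.1")

octets = addr.packed     # the address as 4 raw bytes in network byte order
print(len(octets) * 8)   # 32 bits in total
print(list(octets))      # one integer (0 to 255) per octet

# Each octet is exactly one decimal group of the dot-decimal notation.
print(".".join(str(b) for b in octets))
```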

Links

Byte

https://en.wikipedia.org/wiki/Byte

Unit prefix

https://en.wikipedia.org/wiki/Unit_prefix

SI prefixes

https://en.wikipedia.org/wiki/Metric_prefix

Binary prefix

https://en.wikipedia.org/wiki/Binary_prefix
